In an era where digital artistry thrives, safeguarding creative works from unintended AI use has become a priority for many artists. Enter Glaze and Nightshade—a duo of tools empowering digital artists to shield their creations from being utilized in AI training processes.

Drawing is hard. As an amateur artist, it usually takes me a few hours just to finish one bust-up drawing in an anime style. And don’t get me started on how the result of my drawing sometimes does not match what I have in mind.

Sometimes, I wish that there were a machine that could take all the ideas I have in my mind and turn them into a drawing. Generative AI is the closest thing to the machine I dream of. From an artist’s perspective, though, the existence of Generative AI feels like asking a morally bankrupt genie for a wish.

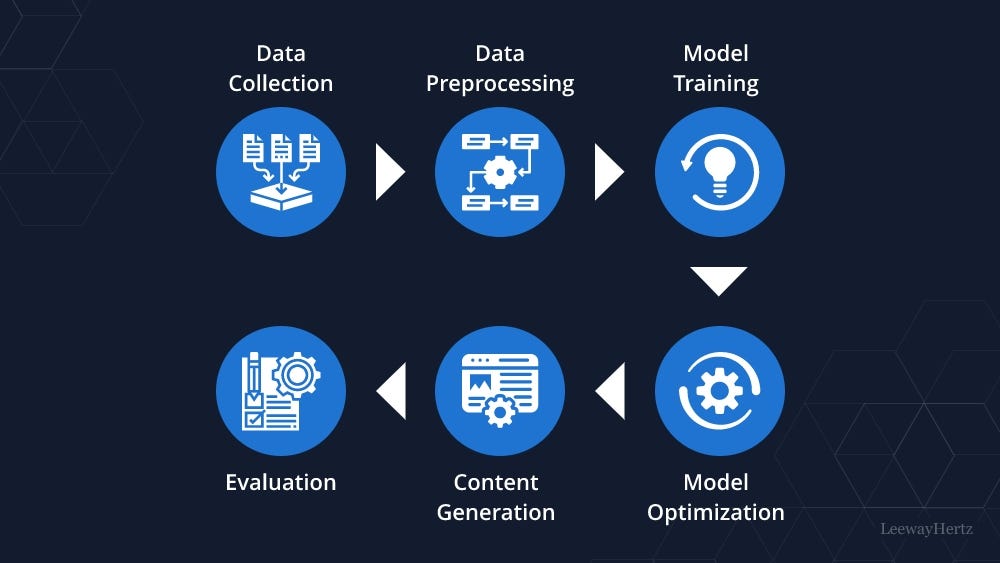

You see, Generative AI can only generate a picture after it has been trained on hundreds of thousands, or even millions, of images: pictures that were made by humans and are sometimes protected by copyright. It might not be a big issue if AI developers were willing to compensate artists for their artworks. But they are not. Sometimes, artists’ work is even scraped for AI training without their consent.

And when artists raise concerns about the ethical and legal use of Generative AI, they are told to adapt or die. No wonder artists feel the need to protect their works and to find a way to prevent them from being used to train AI models.

This is where Glaze and Nightshade come in. Both tools have the same purpose: to help artists protect their works from being scraped to train AI models. Speaking to MIT Technology Review, Ben Zhao, a professor at the University of Chicago and the leader of the team that created Glaze and Nightshade, explains that Glaze is a tool that helps artists “mask” their styles. Nightshade has the same function, except it can also “poison” the dataset used for AI training.

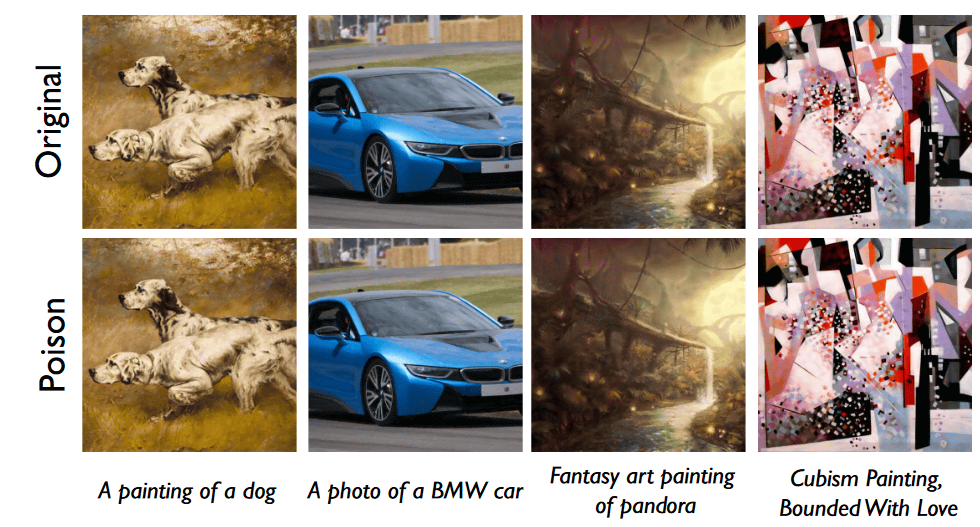

Glaze and Nightshade work in a similar way. Both tools change the pixels of an artwork so that an AI model cannot accurately perceive what it depicts. But these changes are so subtle that the artwork looks unchanged to human eyes.
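
To make "subtle pixel changes" a little more concrete, here is a minimal sketch of a bounded perturbation: every pixel value may move by at most a small budget, so the image stays visually almost identical. This is only an illustration of the "small change" constraint. The function names, the budget of 4, and the use of random noise are all assumptions made for this example; the real tools compute their changes deliberately to mislead a model, and random noise like this would not do that.

```python
import random

def perturb(pixels, budget=4, seed=0):
    """Shift each 0-255 pixel value by at most `budget` (hypothetical helper).

    Random noise is used here purely for illustration; it is NOT how
    Glaze or Nightshade choose their changes.
    """
    rng = random.Random(seed)
    out = []
    for p in pixels:
        delta = rng.randint(-budget, budget)
        # Clamp so the result is still a valid 8-bit pixel value.
        out.append(min(255, max(0, p + delta)))
    return out

def max_change(a, b):
    """Largest per-pixel difference between two equally sized images."""
    return max(abs(x - y) for x, y in zip(a, b))

# A tiny made-up "image": one row of grayscale pixel values.
original = [0, 12, 128, 200, 255, 64, 90, 33]
cloaked = perturb(original)

print("original:", original)
print("cloaked: ", cloaked)
print("largest change:", max_change(original, cloaked))
```

Whatever noise is drawn, the largest change never exceeds the budget, which is the property that keeps the edit hard for a human to notice.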

The team behind Glaze and Nightshade plans to integrate the latter into the former. Ultimately, though, the choice to use Nightshade to protect an artwork is in the artist’s hands. One thing is for sure, Zhao points out: the more people use Nightshade on their artwork, the more potent the poison will be.

How Nightshade Works

For an AI model to generate a picture of an object, it first needs to be able to identify said object. That is why data samples need to be labelled correctly, so an AI model can recognize cats as cats and dogs as dogs. Nightshade can make an AI model perceive an object as something else: for example, a hat as a cake or a cow as a car. The more poisoned samples are fed to an AI model, the wonkier its results become.

To measure the effectiveness of Nightshade, the researchers tested it on an AI model they created and on the latest models of Stable Diffusion. They fed Stable Diffusion 50 poisoned images of dogs and voila! When the model was prompted to generate a picture of a dog, it generated creatures with too many limbs instead. And when the researchers trained the model with 300 poisoned data samples, they even managed to get it to confuse dogs with cats.
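
The idea that more poisoned samples push a model further off course can be shown with a toy example. The sketch below is not Nightshade and not a real image model: it assumes each picture is reduced to a made-up two-number feature, and that the model's idea of a "dog" is simply the average of everything labelled "dog". The feature values, the 100 clean samples, and every function name are assumptions for illustration; the poison counts echo the numbers above only for readability.

```python
# Hypothetical 2-D "features" standing in for what a model sees in an image.
DOG = (0.0, 0.0)
CAT = (10.0, 10.0)

def centroid(points):
    """Average of a list of 2-D points."""
    n = len(points)
    return (sum(p[0] for p in points) / n, sum(p[1] for p in points) / n)

def dist2(a, b):
    """Squared distance between two 2-D points."""
    return (a[0] - b[0]) ** 2 + (a[1] - b[1]) ** 2

def learned_dog_concept(n_clean, n_poison):
    """What the toy model learns for the label "dog".

    Clean samples really look like dogs; poisoned samples look like
    cats to the model but still carry the label "dog".
    """
    samples = [DOG] * n_clean + [CAT] * n_poison
    return centroid(samples)

def looks_like(concept):
    """Which true concept the learned "dog" is closer to."""
    return "dog" if dist2(concept, DOG) <= dist2(concept, CAT) else "cat"

for n_poison in (0, 50, 300):
    concept = learned_dog_concept(n_clean=100, n_poison=n_poison)
    print(n_poison, "poisoned samples ->", concept, looks_like(concept))
```

In this toy setup, a modest amount of poison only drags the "dog" concept partway toward "cat", while a large amount flips it entirely. Real models are far more complex, so the thresholds here say nothing about how many images it takes in practice.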

Nightshade works not only on object labels but also on art styles. So, when a prompter asks an AI to generate a drawing in a certain style, it will come up with another instead. For example, if someone asks for a cartoon drawing, the AI might come up with a drawing in the style of the 19th-century Impressionists instead, as reported by Gizmodo.

Like any other technology, Nightshade can be abused by bad actors, and Zhao realizes this. But he believes poisoning a larger AI model is not easy, because larger AI models are trained with billions of data samples. Besides, he thinks the existence of tools such as Glaze and Nightshade will help artists protect their artworks and give them stronger bargaining power against AI developers who want to use their works as data samples.

Limitations of Nightshade

AI developers, such as Google, Meta, and OpenAI, have been sued by artists for using copyrighted works to train their AIs without consent. Before Glaze and Nightshade, the only way for artists to keep their work out of AI training was to opt out of having their art harvested. But Eva Toorenent, an artist who uses Glaze, believes that was not enough, because artists still have to do all the work while the AI companies still hold power over them. Zhao says that these two tools will hopefully give artists the power to fight back against AI companies.

Meanwhile, Junfeng Yang, a computer science professor at Columbia University, believes that Nightshade will have a big impact by making the AI companies respect artists’ rights more. Hopefully, in the end, these AI companies will be more willing to pay artists royalties if they want to use their works to train AI.

Gautam Kamath, an assistant professor at the University of Waterloo, also calls Nightshade “fantastic” because it shows that AI models have vulnerabilities that will not simply disappear. If anything, Kamath says, the stakes will only get higher as time goes by and people’s trust in AI rises.

Unfortunately, Glaze and Nightshade cannot be used to poison Generative AIs that already exist, such as Midjourney and DALL-E 3, because those AIs have already been trained on artists’ older artworks. But tools like Glaze and Nightshade give artists hope that their work will not be used without their consent.

Autumn Beverly, an artist, says that now that she has tools like Glaze and Nightshade to protect her works, she is posting her art online again. Before this, she admits, she removed her art from the internet once she learned that it was being put into the LAION image database without her consent.

Header: NPR