For years, we’ve been arguing about how smart AI is. Wrong debate. The real question was always: Can it actually do the work?

With GPT-5.5, that question finally has an uncomfortable answer:

Yes… more often than we’re ready for.

This isn’t just a smarter chatbot. It’s not even just a better model. GPT-5.5 marks a subtle but important shift—from something that responds to something that operates. And once you see it that way, everything about its upgrades starts to make sense.

From “Tell Me” to “Handle It”

Older models behaved like very knowledgeable interns.

You ask a question, they answer.

You ask for help, they suggest.

You ask for a plan, they give you bullet points—and then politely disappear.

GPT-5.5 doesn’t disappear. It stays.

It plans, executes, checks, and sometimes even corrects itself mid-process. Not perfectly, not magically—but enough to feel different.

Think of it like this:

- GPT-4 → “Here’s how you should do it.”

- GPT-5 → “Here’s a structured approach.”

- GPT-5.5 → “I’ll start doing it. Tell me when to stop.”

That shift—from advice to action—is the real upgrade.

The Rise of Agentic Behavior (Or: The AI That Doesn’t Wait for You)

One of the biggest changes in GPT-5.5 is something called agentic reasoning. Sounds fancy. It just means: The AI can break a problem into steps, execute them, and loop back if something goes wrong.

In the real world, that looks like this:

Scenario: You’re building a feature for a product

Before:

- You ask for code

- It gives you code

- You debug it

- You ask again

- Repeat until mild emotional damage

Now:

- You describe the feature

- GPT-5.5:

  - Breaks it into components

  - Writes the code

  - Simulates edge cases

  - Suggests improvements

  - Fixes obvious bugs before you even complain

It’s not just faster. It removes entire layers of friction. Suddenly, the bottleneck isn’t “writing code”—it’s deciding what to build.
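
To make that loop concrete, here is a minimal Python sketch of "break it into steps, execute, loop back if something goes wrong." It is an illustration only, not how GPT-5.5 works internally. The names `run_agent_loop`, `plan`, `act`, and `check` are hypothetical stand-ins for whatever model calls or tools a real setup would use, and the toy functions at the bottom just simulate one failed first attempt.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class StepResult:
    step: str
    output: str
    ok: bool
    attempts: int


def run_agent_loop(
    goal: str,
    plan: Callable[[str], List[str]],   # hypothetical: goal -> ordered steps
    act: Callable[[str, int], str],     # hypothetical: (step, attempt index) -> output
    check: Callable[[str, str], bool],  # hypothetical: (step, output) -> passed?
    max_retries: int = 2,
) -> List[StepResult]:
    """Plan, act, check, and retry each step a bounded number of times."""
    if max_retries < 0:
        raise ValueError("max_retries must be >= 0")
    results: List[StepResult] = []
    for step in plan(goal):
        output, ok, attempts = "", False, 0
        # Loop back on failure until the check passes or retries run out.
        while attempts <= max_retries and not ok:
            output = act(step, attempts)
            ok = check(step, output)
            attempts += 1
        results.append(StepResult(step, output, ok, attempts))
        if not ok:
            break  # stop and hand the problem back to a human
    return results


# Toy stand-ins so the sketch runs without any model or API.
def toy_plan(goal: str) -> List[str]:
    return [f"{goal}: design", f"{goal}: implement", f"{goal}: test"]


def toy_act(step: str, attempt: int) -> str:
    # Simulate a buggy first draft of the implementation step.
    if step.endswith("implement") and attempt == 0:
        return "draft with bug"
    return "done"


def toy_check(step: str, output: str) -> bool:
    return output == "done"


results = run_agent_loop("login feature", toy_plan, toy_act, toy_check)
for r in results:
    print(r.step, "ok" if r.ok else "failed", f"(attempts: {r.attempts})")
```

The design choice worth noticing is the bounded retry: the loop corrects itself a few times, then stops and returns what it has, which is where the human in the loop comes back in.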

Coding: From Assistant to (Almost) Teammate

Let’s be honest—AI coding tools have been “impressive” for a while.

Also annoying.

They’re great at generating code… and equally great at generating slightly wrong code that wastes your afternoon.

GPT-5.5 improves this in a way that actually matters:

- Better understanding of large codebases

- Stronger debugging logic

- More consistent structure across files

- Fewer “this looks right but breaks everything” moments

Real-world example:

A startup founder wants to build an internal dashboard.

Before:

- Needs a developer

- Or spends weeks duct-taping tutorials

Now:

- Describes the dashboard

- GPT-5.5:

  - Suggests architecture

  - Builds backend logic

  - Generates UI components

  - Connects APIs

  - Flags potential scalability issues

You still need a human in the loop. But the loop is now… much shorter.

Knowledge Work Is Quietly Getting Automated

This is where things get interesting—and slightly existential. GPT-5.5 isn’t just better at coding. It’s better at knowledge work.

Not the flashy stuff. The real stuff:

- Research synthesis

- Data interpretation

- Report drafting

- Strategy outlines

- Workflow automation

Scenario: Writing a market report

Before:

- Read 20 sources

- Extract insights

- Build narrative

- Double-check inconsistencies

Now:

- Feed sources

- GPT-5.5:

  - Identifies patterns

  - Highlights contradictions

  - Builds a structured narrative

  - Suggests angles you didn’t think about

It’s not replacing expertise. But it’s compressing the time it takes to use that expertise.

Scientific Thinking: Not Just Explaining, But Exploring

One of the more surprising upgrades is how GPT-5.5 handles scientific and technical reasoning.

It’s no longer just summarizing research—it can:

- Form hypotheses

- Analyze datasets

- Suggest experimental approaches

- Iterate on conclusions

Example:

A researcher is exploring a pattern in biological data.

Instead of:

- Running analysis → interpreting manually

They can now:

- Run analysis → let GPT-5.5:

  - Propose explanations

  - Test assumptions

  - Suggest next steps

It doesn’t replace scientists. But it amplifies curiosity. And that might be more dangerous—in a good way.

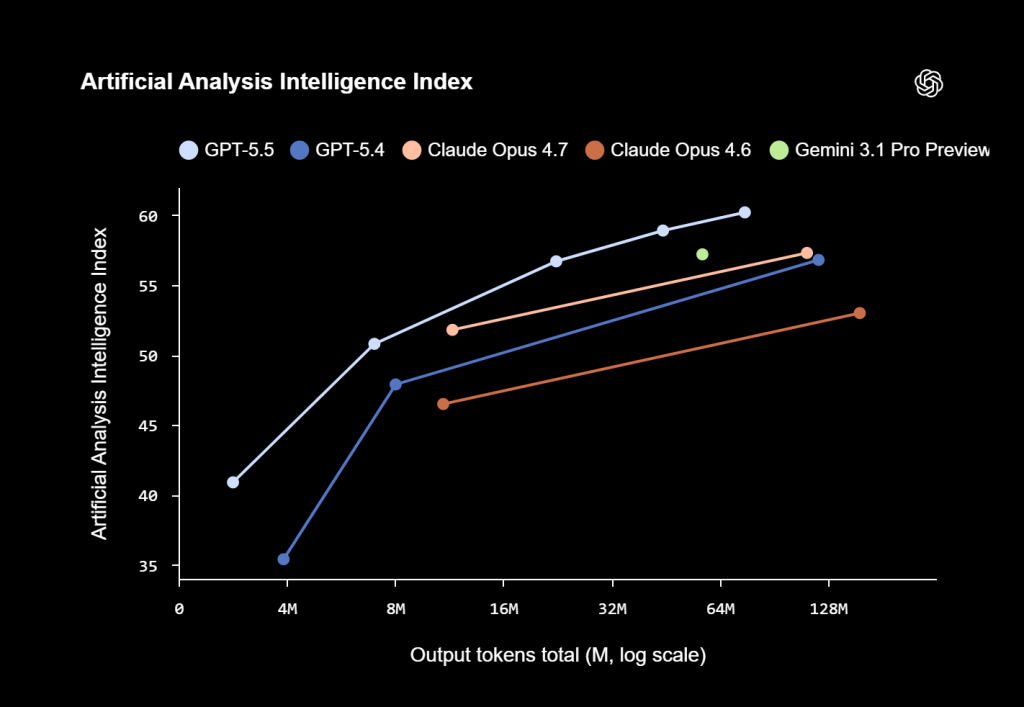

Efficiency: The Upgrade You Don’t Notice (But Should)

Here’s the least exciting upgrade—and arguably the most important:

GPT-5.5 is more efficient.

- Uses fewer tokens

- Maintains similar speed

- Handles more complex tasks without “thinking forever”

Why does this matter? Because intelligence that’s too expensive doesn’t scale. Efficiency is what turns “cool demo” into “daily workflow.”

It’s the difference between:

- Using AI occasionally

- Building your entire process around it
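
A back-of-the-envelope sketch shows why token count decides which side of that line you land on. Every number below is made up for illustration (task volume, tokens per task, price per million tokens); none of it is actual GPT-5.5 pricing or measured usage, and `monthly_cost` is a hypothetical helper.

```python
def monthly_cost(
    tasks_per_day: int,
    tokens_per_task: int,
    price_per_million_tokens: float,
    days: int = 30,
) -> float:
    """Estimate monthly spend as total tokens times price per million tokens."""
    total_tokens = tasks_per_day * tokens_per_task * days
    return total_tokens / 1_000_000 * price_per_million_tokens


# Hypothetical workload: 200 tasks a day at an assumed $10 per million tokens.
baseline = monthly_cost(tasks_per_day=200, tokens_per_task=50_000,
                        price_per_million_tokens=10.0)
# Same workload, assuming each task needs 40% fewer tokens.
leaner = monthly_cost(tasks_per_day=200, tokens_per_task=30_000,
                      price_per_million_tokens=10.0)

print(f"baseline: ${baseline:,.0f} per month")
print(f"leaner:   ${leaner:,.0f} per month")
print(f"saved:    ${baseline - leaner:,.0f} per month")
```

Under these assumed numbers the bill scales linearly with tokens per task, so a model that needs fewer tokens for the same job lowers the cost of every task you route through it.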

It Thinks Better, Not Just Harder

Another subtle shift: GPT-5.5 doesn’t just produce better answers. It also reasons more carefully on the way to them.

- It structures responses more clearly

- It catches its own mistakes more often

- It revisits earlier steps if something feels off

Example:

You ask for a business strategy.

Before:

- You get a clean-looking plan that falls apart in execution

Now:

- You get:

  - A plan

  - Risk factors

  - Trade-offs

  - Alternative approaches

It feels less like a confident guess—and more like an internal discussion.

Persistent Context: The End of “Wait, What Were We Doing?”

Older models had the attention span of a goldfish with a caffeine problem.

GPT-5.5 is better at:

- Staying on track

- Remembering earlier constraints

- Revisiting decisions

Scenario:

You’re working on a long project:

- Brand strategy

- Then content plan

- Then campaign execution

Before:

- You constantly re-explain context

Now:

- The model actually follows the thread

It doesn’t just answer questions—it continues the work.

So… What Changed, Really?

If you strip away the technical jargon, GPT-5.5 introduces three real shifts:

1. It executes, not just responds

It doesn’t stop at ideas—it moves toward outcomes

2. It operates in loops

Plan → act → check → refine

(Like a human who had coffee and accountability)

3. It behaves like a system, not a tool

It combines reasoning, tools, and structure into something closer to a workflow engine

The Slightly Uncomfortable Truth

Here’s the part people don’t always say out loud: GPT-5.5 doesn’t replace humans.

But it compresses the distance between idea and execution so much that:

- The value of “just doing tasks” drops

- The value of “deciding what matters” increases

In other words:

The bottleneck is no longer skill. It’s judgment.

Final Thought: The Quiet Shift

There was no dramatic moment. No “AGI achieved” headline. No sci-fi breakthrough. Just a gradual realization: The AI isn’t waiting for instructions anymore.

It’s starting to work alongside you. And sometimes—just slightly ahead of you. Which is exciting.

And, depending on your job…

just a little bit terrifying.