It’s Not the Intelligence. It’s the Incentives.

For a technology that promises progress, artificial intelligence has managed to generate an unusual amount of resentment.

Not confusion. Not simple fear. Resentment.

Scroll through comment sections, forums, or social feeds, and the phrase “I hate AI” appears again and again. It’s tempting to dismiss this as technophobia or a failure of education. But that explanation is lazy—and wrong.

People don’t hate AI because they don’t understand it.

They hate it because they understand how it’s being used.

The backlash is not about neural networks or transformers. It’s about trust, incentives, and a growing sense that AI is being deployed for companies, not for people. When you look closely, the frustration clusters around five recurring themes.

1. Overused Without Meaning: When “AI” Becomes a Punchline

The first crack in public trust isn’t technical. It’s linguistic.

“AI” has become a marketing word—slapped onto everything from search features to note-taking apps to household products, regardless of whether meaningful artificial intelligence is actually involved. Many of these systems rely on basic automation, heuristics, or long-standing statistical methods, yet are rebranded as “AI-powered” to ride the hype wave.

This phenomenon is now widely known as AI washing: overstating or misrepresenting AI capabilities for commercial advantage. It’s no longer just an internet joke. Regulators, including the U.S. Federal Trade Commission, have explicitly warned companies that there is no “AI exemption” from consumer protection law. If a claim is misleading, adding the word AI does not make it acceptable.

The effect on the public is predictable. When everything is labeled AI, the word stops meaning anything. Worse, it erodes trust in the moments where AI is genuinely transformative.

This mirrors older tech hype cycles. Years ago, “gaming” was stamped on products that had little to do with performance and everything to do with RGB lighting. Eventually, the label became shorthand for empty marketing. Today, “AI” risks the same fate.

Once users feel they’re being sold a buzzword instead of a benefit, skepticism becomes the default.

2. Job Anxiety Isn’t Ignorance—It’s a Rational Response to Conflicting Reality

Few fears are as emotionally potent as the fear of economic irrelevance.

The conversation around AI and jobs is often framed as binary: either AI will massively boost productivity, or it will destroy employment. The reality is more uncomfortable—and more confusing.

Research shows conflicting results, depending on context.

In some environments, AI clearly improves productivity. Field studies in customer support show measurable gains, especially for less experienced workers, helping them perform closer to senior peers. In these cases, AI acts as a scaffold.

But other research tells a very different story. A randomized controlled trial by METR found that experienced open-source developers took about 19% longer to complete tasks when using advanced AI tools on projects they already knew well. Even more striking: the developers believed they had been faster, despite the data showing otherwise.

That gap between perception and reality is crucial.

Most people—including many executives—don’t have a nuanced understanding of what AI can and cannot do. This leads to a dangerous assumption: that AI is inherently cheaper and more efficient than skilled human labor.

In practice, AI is a tool. And tools amplify the skill of the user.

An amateur with AI is still an amateur—just faster at making mistakes. In writing, for example, generative models tend to produce safe, average output. Without a journalist’s judgment, context, or narrative instinct, the result is often bland, generic, and interchangeable. Technically correct, perhaps—but empty.

The resentment grows when companies treat AI as a replacement for expertise rather than an extension of it. Workers don’t just fear job loss; they fear being misunderstood—reduced to a cost line that management believes a model can approximate.

That’s not fear of technology. That’s fear of disrespect.

3. Creator Backlash, “AI Slop,” and the Junk Content Spiral

If there’s one place where public frustration becomes visceral, it’s content.

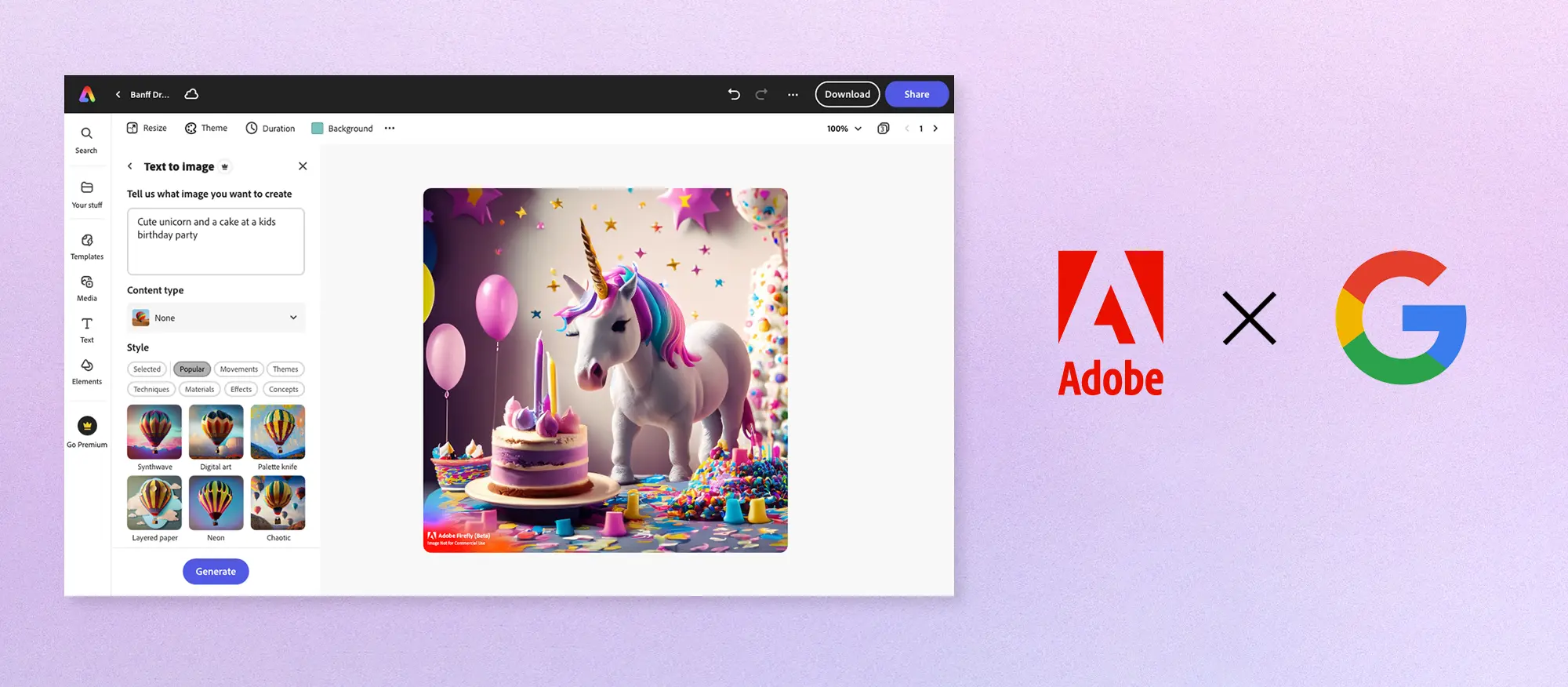

Generative AI has made it trivially easy to produce text, images, and videos at massive scale. The result is what many now call “AI slop”: low-quality, mass-produced content that floods platforms faster than humans can filter or evaluate it.

The cultural signal is loud. “Slop” was named a Word of the Year not because people suddenly became elitist, but because they feel overwhelmed—buried under an avalanche of synthetic sameness.

But here’s where nuance matters.

This problem did not begin with AI.

Long before generative models, media systems rewarded sensationalism, emotional hooks, and easy consumption. Most people did not read scientific journals; they read tabloids. They still do. The incentive structure—attention over substance—has existed since the era of print media.

AI didn’t invent that loop.

It industrialized it.

Generative models simply lowered the cost of feeding an appetite that already existed. Humans choose to deploy AI this way. Humans choose to reward it with clicks, likes, and shares.

Blaming AI alone misses the deeper issue: platforms optimize for engagement, not meaning. AI just accelerates whatever that system rewards.

There’s another layer of unease here. Research increasingly suggests that generative models tend toward regression to the mean: safe language, familiar structures, average ideas. That’s why AI-generated articles often feel hollow. They reflect the statistical center of their training data, not insight.

So when creators see their work used to train models that then produce endless generic imitations—and sometimes compete with them commercially—the backlash isn’t just emotional. It’s existential.

4. Environmental Costs and Consumer Sacrifice: When AI Gets Physical

For a long time, AI felt abstract—something that lived in the cloud.

That illusion is gone.

Training and running large AI models require vast amounts of electricity and water. Recent research and investigative reporting have begun to quantify this footprint, translating it into carbon emissions, water consumption, and grid strain. Suddenly, AI is no longer just a software debate; it’s an infrastructure problem.

And here’s where resentment sharpens: the costs are socialized, while the benefits are concentrated.

Data centers consume resources in regions already under environmental stress. Communities feel the pressure through higher energy demand and water usage. At the same time, supply chains shift to prioritize AI infrastructure over consumer needs.

In the hardware world, we can see opportunity cost in real time. Memory manufacturers increasingly prioritize high-bandwidth products for AI data centers, while some consumer memory brands are shut down or deprioritized. Analysts warn that mid-range laptops may regress to lower RAM configurations, and smartphones could even move backward in memory tiers. The reason is not that technology can’t do better, but that supply is being redirected.

The frustration compounds in the GPU space.

Across long-form technical reviews and community discussions, on channels like Gamers Nexus and platforms such as Reddit and Steam, a consistent sentiment emerges: users still care deeply about raw rasterization performance. AI-based features like upscaling and frame generation are welcomed as enhancements, not replacements.

Frame generation is appreciated when a GPU is already strong. It is resented when it feels like a crutch—used to mask weak baseline performance or justify stagnation elsewhere.

This is not an anti-AI stance. It’s a trust issue.

When consumers feel that AI priorities are degrading the core experience—while marketing insists everything is “better than ever”—resentment is inevitable.

5. The Broken Promise: “AI Will Help Everything”… So Why Doesn’t It?

The final source of frustration is perhaps the most corrosive: the gap between promise and reality.

AI is sold as a universal helper—one that will improve healthcare, education, creativity, productivity, and daily life. Yet for most people, the most visible impact so far has been… more content.

AI writes posts. AI generates images. AI produces videos. Often at enormous computational cost, and often with questionable value.

Meanwhile, AI video generation remains expensive, resource-intensive, and far from reliable. Many of the truly transformative applications remain experimental, localized, or inaccessible.

This disconnect makes more sense when you look at the economics.

According to reporting based on Bain estimates, AI companies may need to generate around $2 trillion in annual revenue by 2030 to justify the infrastructure spending required to build this future. That number is staggering—larger than the combined recent revenues of several tech giants.

This creates immense pressure to monetize quickly.

When revenue targets are that high, AI can’t be optional. It must be bundled. Defaulted. Forced into workflows. Marketed as inevitable. Whether it’s ready or not.

From the outside, this looks less like progress and more like desperation. People sense that they are being asked to adapt—not because AI clearly improves their lives, but because the industry needs them to.

And that’s where belief breaks.

The Real Conclusion: People Don’t Hate AI. They Hate Being Treated as Inputs.

Taken together, these five forces tell a consistent story.

- AI is overhyped, diluting trust.

- Productivity gains are uneven, but belief in AI’s cheapness spreads faster than evidence.

- Generative tools accelerate an already-broken content incentive loop.

- Environmental and supply-chain costs are increasingly visible to everyday consumers.

- The economic pressure to monetize AI pushes it into places where it isn’t ready—or welcome.

This is not a rejection of intelligence, automation, or innovation.

It’s a rejection of a system where technology is deployed primarily to extract value—attention, labor, data, money—while the costs are borne by everyone else.

If the AI industry wants legitimacy, it won’t earn it through better demos or louder marketing.

It will earn it through honesty, restraint, accountability, and proof that its benefits extend beyond balance sheets.

Until then, the resentment will remain—not because people hate the future, but because they don’t like how this one is being built.